Rethinking the data model in a cloud world: toward a practical approach to securing the cloud

Identity management has come a long way in the 30 or so years since its infancy. A great deal of investment has gone into all sorts of standards, platforms, and applications. Still, some ideas take root and keep framing the industry’s direction and mindset long after it becomes apparent that they no longer fit the modern world. Take the data model of X.500, a standard that appeared in the late ’80s, primarily for the telco (think “land line”) industry. In that setting the data model, although clunky, fit pretty well, because a directory service was really just a digital version of a phone book (remember “The White Pages”?): a lookup service. And even X.509, though it has become the backbone of securing the Internet, enshrines the need for a trusted root and certification path validation.

With the introduction of LDAP, a lightweight alternative to the X.500 Directory Access Protocol (DAP), X.500-style directories became much more accessible, which enabled companies like Netscape to run much faster lookups for email and other servers. However, LDAP retained the X.500 data model, so it inherited problems such as:

- Top-down hierarchical information structure

- Difficult to federate

- Structured, inflexible schema that’s difficult to extend

- Centralized IT management

- Relies on identity information from a trusted source

- Abstractions like roles and groups mean enforcement points are at the connected systems
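The first two problems can be made concrete with a short sketch. In X.500 and LDAP, an entry’s name (its distinguished name, or DN) is the path from the entry up to the root of the tree, so the name itself encodes the entry’s position. The Python below is a minimal illustration under stated assumptions: the helpers `parse_dn`, `format_dn`, `move_entry`, and `common_suffix`, and all of the DNs, are hypothetical, and the parser is deliberately naive (it ignores RFC 4514 escaping and multi-valued RDNs), so it is not meant for use against a real directory.

```python
from __future__ import annotations


def parse_dn(dn: str) -> list[tuple[str, str]]:
    """Split a DN into (attribute, value) RDN pairs, leaf first.

    Hypothetical and deliberately naive: ignores RFC 4514 escaping and
    '+'-joined multi-valued RDNs, and compares values case-sensitively,
    unlike most directory matching rules.
    """
    rdns = []
    for part in dn.split(","):
        attr, _, value = part.strip().partition("=")
        rdns.append((attr.strip().lower(), value.strip()))
    return rdns


def format_dn(rdns: list[tuple[str, str]]) -> str:
    """Join RDN pairs back into a DN string, leaf first."""
    return ",".join(f"{attr}={value}" for attr, value in rdns)


def move_entry(dn: str, new_parent: str) -> str:
    """Return the DN an entry gets after being moved under new_parent.

    Only the leaf RDN survives: because the name encodes the entry's
    position in the tree, reorganizing the tree renames the person.
    """
    leaf = parse_dn(dn)[0]
    return format_dn([leaf] + parse_dn(new_parent))


def common_suffix(dn_a: str, dn_b: str) -> str:
    """Return the longest shared ancestor of two DNs ('' if none).

    Entries in unrelated trees (e.g. a partner's directory) share no
    ancestor, so there is no single tree in which both can be found.
    """
    shared = []
    for a, b in zip(reversed(parse_dn(dn_a)), reversed(parse_dn(dn_b))):
        if a != b:
            break
        shared.append(a)
    return format_dn(list(reversed(shared)))


if __name__ == "__main__":
    employee = "uid=jdoe,ou=Engineering,o=Example,c=US"
    print(move_entry(employee, "ou=Sales,o=Example,c=US"))
    # uid=jdoe,ou=Sales,o=Example,c=US
    print(repr(common_suffix(employee, "uid=asmith,ou=People,dc=partner,dc=com")))
    # ''
```

A transfer between departments changes the user’s identifier, and two organizations’ trees have no common root to hang a shared lookup from.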

As companies started to break outside the firewall to connect with partners, customers, and contractors, something was needed to glue all of these directories together, and so federation was born. Still, the X.500 data model was largely preserved. (On a side note, I called out all of these problems in a 2001 paper I wrote at Burton Group called “Beyond LDAP”; it was said that I had “killed LDAP.” We all got some laughs out of it, but I just answered, “No, I’m merely the coroner.” It’s probably still on Gartner’s site somewhere!)

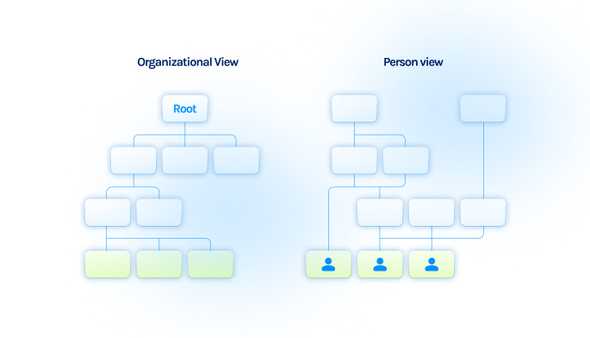

Sure, the centralized model is convenient for some, but the world just isn’t that way. For one, it’s more matrixed now than ever. In the accompanying diagram, the left side shows how organizations often want to run security, which also reflects the assumptions built into all the tooling made for them. The right side shows a more realistic, user-centric view, in which users have a whole range of services, identities, and needs that all change dynamically.

Given how rigid these early-era identity services were, it’s actually rather impressive that their models withstood a direct assault from the internet’s chaotic model of expansion, à la REST.

Take 2

Newer approaches such as OAuth and OpenID Connect have greatly improved the ability to authenticate and authorize across distributed services, but these standards still run into compatibility issues with custom scopes, much as LDAP did with custom attributes.
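To illustrate the scope problem: OAuth 2.0 defines the `scope` value as a list of space-delimited, case-sensitive strings whose meaning is defined by the authorization server (RFC 6749, section 3.3). The protocol standardizes the envelope, not the vocabulary, so a resource server has to know each provider’s custom scope names. In the minimal Python sketch below, the provider names, the scope strings, and the helper functions are all hypothetical.

```python
from __future__ import annotations

# Hypothetical custom scopes that two authorization servers might define
# for the same intent ("read reports"). Neither is a real product's scope.
REPORT_READ_SCOPE = {
    "provider_a": "reports:read",
    "provider_b": "api.reports.readonly",
}


def granted_scopes(scope_param: str) -> set[str]:
    """Parse an OAuth 2.0 scope value: space-delimited, case-sensitive strings."""
    return {s for s in scope_param.split(" ") if s}


def can_read_reports(scope_param: str, provider: str) -> bool:
    """The check only works if we know this provider's scope vocabulary."""
    return REPORT_READ_SCOPE[provider] in granted_scopes(scope_param)


if __name__ == "__main__":
    token_scope = "openid api.reports.readonly"  # issued by provider_b
    print(can_read_reports(token_scope, "provider_b"))  # True
    # A policy written against provider_a's names rejects the same grant.
    print(can_read_reports(token_scope, "provider_a"))  # False
```

A mapping table like `REPORT_READ_SCOPE` has to be maintained for every provider, which is the OAuth counterpart of reconciling custom LDAP attributes.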

In short, the industry hasn’t produced a panacea for access problems, and a general-purpose solution isn’t in sight. The major cloud platforms have already adopted, and must continue to support, their own approaches to access, and these will keep diverging (for a primer on their existing differences, have a look here). Even where a standard exists, such as RBAC, vendors interpret it very differently. GitHub, for example, uses a combination of organizations, teams, and roles to manage repository access. Ops tools such as VPNs and proxies have their own custom solutions for managing access. So, if anything, access management is getting more complex as the industry grows.

A critical design principle at Trustle is to connect natively to each cloud system and update it in its own vernacular. This lets organizations distribute ownership of resources and removes arcane dependencies across systems. Trustle is also engineered for distributed administration of access and for any-to-any relationships with user accounts on target systems. What makes this pragmatic approach possible is easy deployment as a SaaS solution, the use of AI & ML to produce recommendations, and automation through native APIs. We’re also focused on cloud platforms and operations tooling; personal productivity applications are generally well served by existing SSO tools. But when it comes to platforms like AWS, Azure, GCP, and GitHub, we feel our “go native” approach is the only viable solution.
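The “go native” principle can be illustrated with a small adapter sketch. This is not Trustle’s implementation; it only shows the general idea of translating one abstract access request into each target system’s own vocabulary. The `Level` and `AccessRequest` types, the adapter functions, the output dictionary keys, and the level-to-permission mappings are hypothetical. The IAM policy fields (`Version`, `Statement`, `Effect`, `Action`, `Resource`), the S3 action names, and GitHub’s repository permission names (`pull`, `push`, `admin`) are real, but the code only builds descriptions of changes and calls no APIs.

```python
import json
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    """Hypothetical system-neutral access levels."""
    READ = "read"
    WRITE = "write"
    ADMIN = "admin"


@dataclass(frozen=True)
class AccessRequest:
    account: str   # the user's account on the target system (one person, many accounts)
    resource: str  # resource identifier in the target system's own format
    level: Level


# Hypothetical mapping from neutral levels to real S3 action names.
S3_ACTIONS = {
    Level.READ: ["s3:GetObject", "s3:ListBucket"],
    Level.WRITE: ["s3:GetObject", "s3:ListBucket", "s3:PutObject"],
    Level.ADMIN: ["s3:*"],
}

# Hypothetical mapping onto GitHub's repository permission names.
GITHUB_PERMISSIONS = {
    Level.READ: "pull",
    Level.WRITE: "push",
    Level.ADMIN: "admin",
}


def to_aws_change(req: AccessRequest) -> dict:
    """Describe the change as an IAM policy document to attach to req.account."""
    return {
        "system": "aws",
        "attach_policy_to": req.account,
        "policy_document": {
            "Version": "2012-10-17",
            "Statement": [{
                "Effect": "Allow",
                "Action": S3_ACTIONS[req.level],
                # Bucket ARN for ListBucket, object ARNs for Get/PutObject.
                "Resource": [req.resource, f"{req.resource}/*"],
            }],
        },
    }


def to_github_change(req: AccessRequest) -> dict:
    """Describe the change as a repository collaborator permission."""
    return {
        "system": "github",
        "repository": req.resource,
        "collaborator": req.account,
        "permission": GITHUB_PERMISSIONS[req.level],
    }


ADAPTERS = {"aws": to_aws_change, "github": to_github_change}


def plan(system: str, req: AccessRequest) -> dict:
    """Dispatch to the adapter that speaks the target system's vernacular."""
    return ADAPTERS[system](req)


if __name__ == "__main__":
    # One (hypothetical) person holds a different account on each system.
    aws = plan("aws", AccessRequest("jdoe-analyst", "arn:aws:s3:::example-reports", Level.READ))
    gh = plan("github", AccessRequest("jdoe-gh", "example-org/example-repo", Level.WRITE))
    print(json.dumps(aws, indent=2))
    print(gh["permission"])  # push
```

Keeping each translation in its own adapter lets every target keep its native model instead of being forced into a shared schema, at the cost of maintaining one mapping per system.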