If the agent can act in production, someone needs to own the keys

Most companies didn’t set out to create a new class of privileged super-user with unclear ownership, patchy controls, and a default habit of borrowing someone else’s access. They just wanted a helpful AI agent to fetch data, open tickets, write code, chase workflows, or prod a cloud API into doing something useful.

And yet, here we are, livin’ in the future, where agents are operating across production environments and AI access controls are still catching up, held together by inherited and potentially broad permissions, long-lived credentials, and a smattering of wishful thinking. Or they might be; nobody can say for sure, because we can’t manage what we can’t see.

And whether it’s been formally agreed or not, AI access has already landed on the desk of security teams. Not because they built the agents, but because they own identity, permissions, auditability, and incident response when things decide to go crazy.

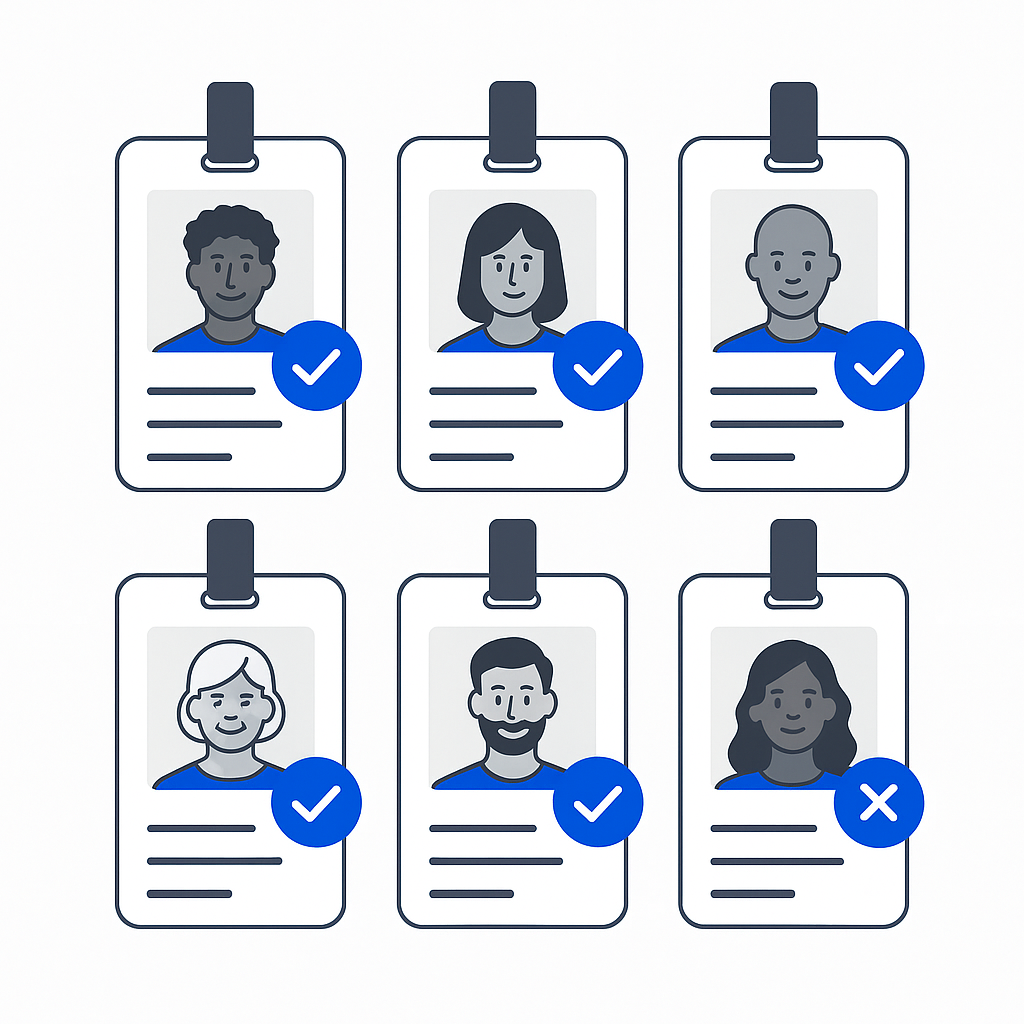

The scale of the shift is, without exaggeration, biblical. The Cloud Security Alliance (CSA) reports that 85% of organizations already use AI agents in production, with 73% expecting them to become critical within 12 months. Yet 68% can’t clearly distinguish between actions taken by humans and those taken by agents. That’s not just a logging problem. It’s an AI access problem.

If we can’t tell who (or what) did something, and if we can’t see the agents, we can’t govern them, constrain them, or prove any of it to an auditor, now that AI audits have become part of international cybersecurity standards compliance.

AI Access Is Expanding Faster Than Identity Controls

From a technical perspective, AI agents are (on the surface at least) another form of non-human identity. They authenticate, request access, call APIs, and interact with infrastructure. The difference is speed, scale, and unpredictability.

“Complex systems are inherently unpredictable.”

- John H. Holland, American scientist and pioneer of complexity theory.

The gap between a user’s intent (“pull last quarter’s revenue report”) and the agent’s actual actions (“query multiple data stores, generate temporary credentials, access a broader dataset, and trigger downstream workflows” - yes, extreme, but possible) can be unexpected and difficult to constrain. That is especially true when those actions run across systems with limited visibility, on permissions handed over by users with good but arguably misguided intentions.

Agents are already embedded across core systems:

- 56% interact with internal apps or APIs.

- 49% access SaaS platforms.

- 44% touch cloud infrastructure.

- Over a third operate in CI/CD pipelines and data platforms.

This creates what NIST is now formalizing as a first-class identity challenge. Its 2026 NCCoE guidance makes clear that organizations must apply standard identity controls (identification, authentication, authorization, and auditing) directly to AI agents, rather than treating them as an edge case.

The problem is that most organizations haven’t caught up.

AI access is usually bolted onto existing identity models rather than designed properly. And that leads to the same patterns security teams have been wearily fighting for years, but with more automation and less visibility.

When AI Access Comes From Convenience

The biggest issue isn’t that agents have access. It’s how they get it.

According to the CSA:

- 43% of organizations use shared service accounts for agents.

- 31% allow agents to operate under human identities.

- 74% say agents often receive more access than necessary.

- 79% say agents create access paths that are difficult to monitor.

This is inherited access. Not engineered access.

Instead of defining what an agent needs to do and granting only that, many organizations are letting agents borrow permissions from existing identities, automation logic, or whoever clicked “run”.

OWASP’s guidance says that agents should be restricted to the minimum tools required, with permissions scoped per tool and explicit authorization for sensitive operations. Anything broader introduces unnecessary risk and expands the blast radius.
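To make the idea of per-tool scoping concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not OWASP’s reference implementation: the `ToolPolicy` class, the `REPORTING_AGENT_TOOLS` allowlist, and the tool and action names are all hypothetical. The point is the shape: anything not listed is denied, and sensitive actions need an explicit approval.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """What one agent may do with one tool (all names are hypothetical)."""
    scopes: frozenset[str]                        # actions the agent may perform
    needs_approval: frozenset[str] = frozenset()  # sensitive actions


# Hypothetical allowlist for a reporting agent: anything absent is denied.
REPORTING_AGENT_TOOLS = {
    "warehouse": ToolPolicy(scopes=frozenset({"read"})),
    "ticketing": ToolPolicy(
        scopes=frozenset({"read", "create", "close"}),
        needs_approval=frozenset({"close"}),
    ),
}


def authorize(tools, tool, action, approved=False):
    """Return 'allow', 'needs_approval', or 'deny' for a single tool call."""
    policy = tools.get(tool)
    if policy is None or action not in policy.scopes:
        return "deny"  # default-deny: unlisted tools and actions never pass
    if action in policy.needs_approval and not approved:
        return "needs_approval"
    return "allow"
```

With this allowlist, the agent can read from the warehouse but cannot write to it, cannot touch any tool that isn’t listed, and can only close a ticket once a caller passes `approved=True`.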

In practice, though, convenience wins. And convenience tends to look like over-permission.

AI Access Without Lifecycle Control

Even when AI access is granted deliberately, it often lacks a proper lifecycle.

Credentials persist. Permissions linger. Agents continue operating long after their original purpose has changed or disappeared.

This directly conflicts with guidance from every major cloud provider:

- AWS recommends using temporary credentials and avoiding long-lived access wherever possible.

- Google advises minimizing and rotating service account keys, disabling unused credentials, and favoring short-lived identity federation.

- GitHub highlights the security benefits of short-lived tokens and eliminating stored secrets in automation workflows.

The solution is actually simple: reduce standing privilege, shorten credential lifetimes, and make access ephemeral. But most AI access today is anything but ephemeral. It’s sticky. And sticky access becomes invisible over time.
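As a rough illustration of what “ephemeral” means in code, the sketch below mints a credential that carries its own expiry. It uses only the Python standard library and is not any cloud provider’s API; `EphemeralCredential`, `issue_credential`, and the 15-minute default lifetime are assumptions made for the example. In a real deployment the provider’s own token service would issue and validate these.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class EphemeralCredential:
    """A credential that carries its own expiry (hypothetical type)."""
    token: str
    agent_id: str
    expires_at: float  # Unix timestamp after which the token is useless

    def is_valid(self, now=None):
        now = time.time() if now is None else now
        return now < self.expires_at


def issue_credential(agent_id, ttl_seconds=900, now=None):
    """Mint a random token that stops working after ttl_seconds."""
    now = time.time() if now is None else now
    return EphemeralCredential(
        token=secrets.token_urlsafe(32),
        agent_id=agent_id,
        expires_at=now + ttl_seconds,
    )
```

Because the expiry travels with the credential, nothing has to remember to clean it up later. A forgotten token simply stops working.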

What It Actually Means to Own AI Access

If security teams are going to carry responsibility for AI access, then the operating model has to reflect reality. Not more tickets. Not more manual approvals. Not more spreadsheets of service accounts that nobody fully trusts. The foundation is straightforward, even if execution isn’t.

- AI agents need to exist as distinct identities, not shared accounts or borrowed users. Without that, attribution and control fall apart immediately.

- Access must be scoped to specific tools and resources, so that each action is bound to a defined purpose. Broad, inherited access has no place in agent-driven workflows.

- Permissions should be granted just in time, aligned to the task or workflow, and expire automatically when that task completes. This removes standing privilege and reduces exposure without slowing teams down.

- Security teams need clear visibility into AI access, so they can see what agents can do, what they are doing, and where access has drifted.

- And critically, access must be revocable and automatically deprovisioned, so that when agents, workflows, or integrations change, privileges don’t linger unseen in the background.

These aren’t new ideas. They are the same principles behind least privilege, zero standing access, and modern identity-first access. What’s changed is the speed and scale at which they now operate.
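The principles above can be sketched as a small grant registry. This is a simplified in-memory illustration, not a product design: `Grant`, `GrantRegistry`, and the identifiers used with them are hypothetical. Each grant is tied to one agent identity, one resource and action, and one task. It expires on its own and can be revoked when the task finishes or the agent is retired.

```python
from dataclasses import dataclass


@dataclass
class Grant:
    agent_id: str      # a distinct agent identity, never a shared account
    resource: str
    action: str
    task_id: str       # the purpose this access is bound to
    expires_at: float
    revoked: bool = False


class GrantRegistry:
    """In-memory store of just-in-time grants (hypothetical, for illustration)."""

    def __init__(self):
        self._grants = []

    def grant(self, agent_id, resource, action, task_id, now, ttl_seconds):
        g = Grant(agent_id, resource, action, task_id, now + ttl_seconds)
        self._grants.append(g)
        return g

    def is_allowed(self, agent_id, resource, action, now):
        # Allowed only if a matching grant exists, is unexpired, and is not revoked.
        return any(
            g.agent_id == agent_id and g.resource == resource
            and g.action == action and not g.revoked and now < g.expires_at
            for g in self._grants
        )

    def complete_task(self, task_id):
        """Revoke everything issued for a task as soon as it finishes."""
        return self._revoke(lambda g: g.task_id == task_id)

    def deprovision_agent(self, agent_id):
        """Revoke every grant held by a retired agent."""
        return self._revoke(lambda g: g.agent_id == agent_id)

    def _revoke(self, match):
        count = 0
        for g in self._grants:
            if match(g) and not g.revoked:
                g.revoked = True
                count += 1
        return count
```

The registry doubles as the visibility layer: listing its grants shows what each agent can do right now, and there is no path by which access outlives both its task and its time limit.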

Making AI Access Work Without Slowing Everything Down

Security needs control. Engineering needs speed. AI agents amplify both. The way to get control isn’t a heavier process. It’s better integration between identity and workflow. In practice, that means treating AI access as part of the same system that governs human and machine identities:

- Discovering agent identities and their entitlements across cloud and SaaS environments.

- Routing access requests through tools teams already use, like Slack or Microsoft Teams.

- Applying policy to determine when access is automatically granted versus reviewed.

- Issuing short-lived, scoped permissions tied to specific tasks.

- Logging every action centrally to create a clear audit trail.

- Running access reviews that include both human and non-human identities.

This shifts AI access from something reactive to something designed. From something opaque to something measurable. And from something risky to something governable.
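Two of the steps above, policy-based routing and central logging, are easy to sketch. The example below is a minimal illustration with made-up rules: the `AUTO_APPROVE` table, the one-hour ceiling, and the request fields are assumptions, not a recommended policy. It decides whether a request is granted automatically or sent for review, and builds an audit record that states whether the actor was a human or an agent.

```python
import json
from datetime import datetime, timezone

# Hypothetical policy: which (sensitivity, action) pairs skip human review.
AUTO_APPROVE = {("low", "read"), ("low", "write"), ("medium", "read")}
MAX_AUTO_TTL_SECONDS = 3600  # longer-lived requests always go to review


def decide(request):
    """Return 'auto_grant' or 'review' for an access request dict."""
    key = (request["sensitivity"], request["action"])
    if key in AUTO_APPROVE and request["ttl_seconds"] <= MAX_AUTO_TTL_SECONDS:
        return "auto_grant"
    return "review"


def audit_event(request, decision, timestamp=None):
    """Build one JSON audit line that records who (or what) asked."""
    ts = timestamp or datetime.now(timezone.utc).isoformat()
    return json.dumps({
        "timestamp": ts,
        "actor_id": request["actor_id"],
        "actor_type": request["actor_type"],         # "human" or "agent"
        "on_behalf_of": request.get("on_behalf_of"),  # the human who triggered it, if any
        "resource": request["resource"],
        "action": request["action"],
        "decision": decision,
    }, sort_keys=True)
```

Recording `actor_type` and `on_behalf_of` on every event is what lets a reviewer later separate what an agent did from what a person did.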

AI Access Isn’t a New Risk. It’s Old Risk, Accelerated

There’s a tendency to treat AI as a new security domain with its own playbook. It isn’t. At its core, AI access is an identity problem: who has access, why, how much, for how long, and whether it can be proven and revoked.

What’s changed is speed. Agents inherit permissions faster, operate across more systems, and fail at machine scale. So when people ask, “Who owns AI access?”, the honest answer is simple: security already does.

The real question is whether they have the visibility, control, and automation to manage it before it manages them. AI access won’t be secured by better intentions or policy decks, but by making agents legible to IAM: owned, identifiable, scoped, short-lived, and removable.

Everything else is just garnish.

When someone asks, “Who in your company owns AI access?” the answer should be clear, visible, and provable, because if agents can act in our environment, ownership isn’t optional. It’s the difference between controlled access and controlled damage, and (right now) the buck stops with security teams.

If AI access is now part of our identity problem, and statistically it is, it should be governed like one. Start a Trustle free trial and see every agent, entitlement, and access path across your multi-cloud environments in as little as 30 minutes.