AI service attacks aren’t a new genre of breach. They’re the newest link in the attack chain

Cloud attack chains were once tidy (by criminal standards): pop an endpoint, steal a token, grab a key, pivot to the control plane, exfiltrate data, maybe drop some ransomware for a dramatic exit.

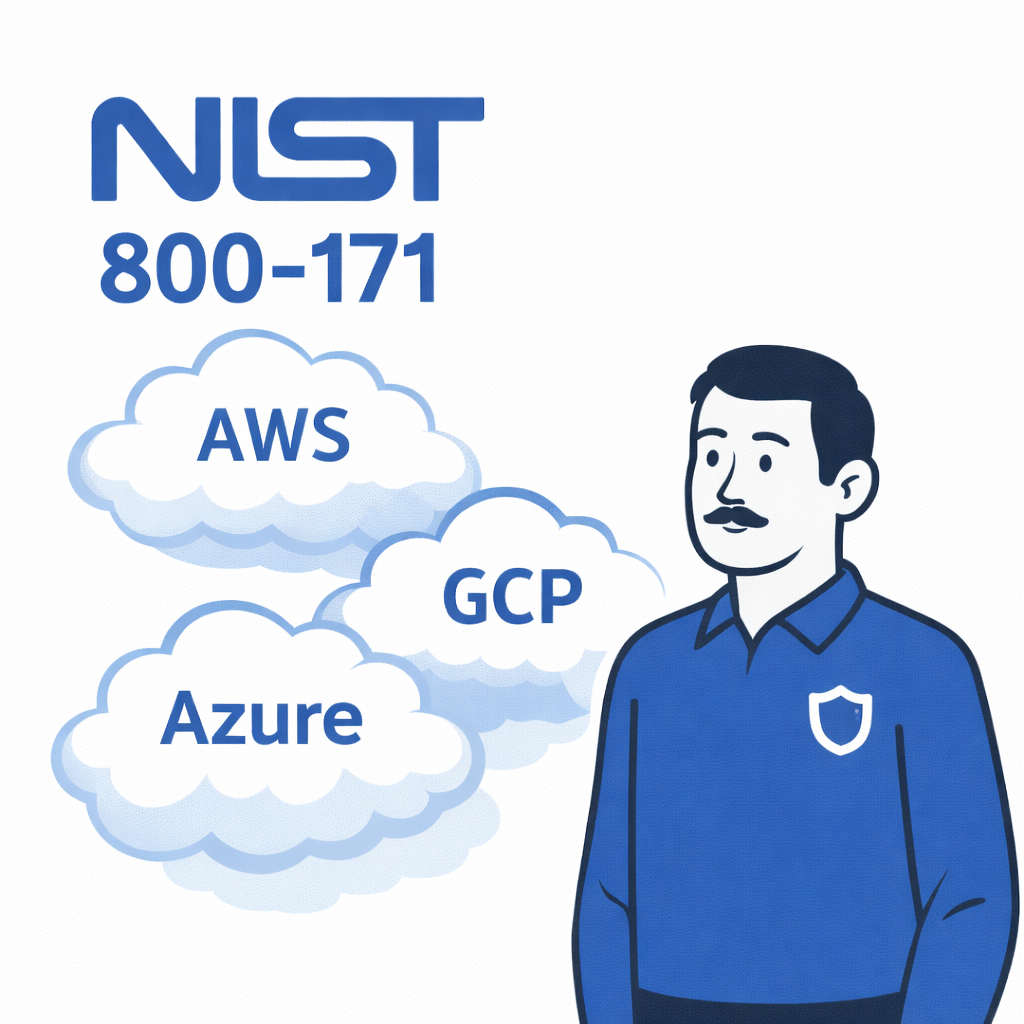

Now attackers are doing something a little more effective. They’re diversifying the services they target across AWS, GCP, and Azure, and increasingly routing the “business value” part of the chain through AI-powered services: copilots, chat interfaces, agent workflows, retrieval systems, and model APIs.

When Service-Plane Hopping Becomes Normal

Multi-cloud environments already have a problem: we don’t have one control plane, we have three (at minimum), plus SaaS. Add AI services and we’ve effectively introduced a new non-human identity “privileged layer” that sits on top of identity, data, and automation.

Threat actors like this for two main reasons:

- AI services are wired into business data by design.

RAG systems, copilots, connectors, and “chat with our docs” features are not edge systems. They’re data-adjacent and frequently permissioned. - AI systems are confusable deputies.

The UK NCSC states that prompt injection isn’t SQL injection, and the analogy can lull teams into the wrong mitigations. Their core point is that the failure modes are different, often worse, because the system is built to follow instructions, and those instructions can be smuggled in via content.

This is the CISO governance moment: if we treat AI as a “feature”, we’ll bolt it onto existing controls and call it enablement. If we treat it as a new access pathway, we’ll govern it like a Tier-0 system.

Why This Is Showing Up in Real Incidents

A useful reality check: our workforce is already using GenAI whether we sanctioned it or not.

Verizon’s 2025 DBIR Executive Summary reports that a meaningful slice of employees are using GenAI services on corporate devices, and, more importantly, many of them do so using non-corporate emails (72%) or corporate emails without integrated authentication (17%). That’s shadow AI plus identity drift, wrapped in a compliance headache.

AI service attacks thrive on two things: access and ambiguity.

Example 1: Copilot-Style Prompt Injection That Exfiltrates Data

The EchoLeak case study (CVE-2025-32711) is a clean “this is not theoretical” anchor: a zero-click prompt injection in Microsoft 365 Copilot that enabled data exfiltration via a crafted email, chaining multiple bypasses across content handling.

We don’t need to love Copilot to learn from it. The lesson is architectural:

- “Untrusted content” (email, documents, tickets) can carry instructions.

- The LLM can cross trust boundaries on the user’s behalf.

- Output can be routed into channels that were never designed as secure egress paths.

Example 2: Stolen Keys Turn AI Into a Billing and Abuse Platform

Microsoft’s Digital Defense Report describes Storm-2139 exploiting stolen API keys to bypass AI governance and abuse popular AI services, including Azure OpenAI.

Again, the lesson isn’t “Microsoft bad”, it’s “keys are still keys”. If our organization can’t reliably govern non-human credentials across AWS/GCP/Azure, the model endpoint is just another expensive place to be wrong.

Example 3: When The Target Isn’t The Shell, It’s The AI Assistant

In late February 2026, a threat actor was spotted offering root shell access to a CEO’s laptop at a UK-based automation company for roughly $25,000 in cryptocurrency. At first glance, that sounds like a standard access broker listing, another compromised executive endpoint for sale via dark web services, but the real prize wasn’t the shell.

This listing showcases something far more insidious and a portent of things to come. The CEO was running OpenClaw, the AI-powered personal assistant tied directly into business systems. Inside that environment were personal conversations, company databases, Telegram bot tokens, and Trading 212 API keys, essentially a live operational dashboard of the executive’s digital life.

The attacker wasn’t just selling a compromised machine. They were selling an AI interface into the company’s data and automation layer.

The Technical Pattern we Should Care About

If we want a practical taxonomy for boards and architects, the OWASP Top 10 for LLM Applications gives us the map: prompt injection, insecure output handling, excessive agency (tool calling), supply chain risks, denial of service/cost spikes, and sensitive info disclosure.

NIST’s AI Risk Management Framework explicitly calls out indirect prompt injection: attackers can inject prompts into data that the model later retrieves, without needing direct access to the chat interface.

In the parlance of governance:

- Our AI layer is not one system; it’s a pipeline

(identity → retrieval → model → tools → outputs). - Every connector is a data plane extension.

- Every tool/action the agent can take is a privileged decision.

What Safe Enablement Looks Like in Multi-Cloud

This is how we make AI adoption fast without letting it become a fourth cloud we forgot to secure.

1) Make Corporate Identity Non-Negotiable

Kill the “personal account” problem decisively.

- SSO-only access to approved GenAI tools

- Conditional access (device posture, geo, risk)

- Separate identities for humans vs agent/workload identities

- Short-lived credentials for model/API access; rotate keys like we mean it

This is also how we reduce friction: developers will use the approved path if it’s the easiest path.

2) Treat Tool Calling Like Production Automation

If an agent can call tools, it’s automation with a personality.

- Allowlist tools (what can be called)

- Scope permissions per tool (read/search ≠ export ≠ admin)

- Step-up approvals for high-impact actions (export/share externally/create tokens)

- Default-deny for external egress from AI workloads unless explicitly required

This is the heart of AI service attacks: attackers don’t “hack the model”, they coerce the system into doing things it was allowed to do.

3) Engineer For Containment, Not Perfection

NCSC’s framing is useful here: don’t promise we’ll “solve prompt injection”; design so prompt injection can’t become organizational catastrophe.

Practical containment patterns:

- Discover AI and non-human identities automatically. Maps service principals, API keys, and agent accounts across AWS, GCP, and Azure so teams can see exactly what identities AI services are running under.

- Enforce just-in-time access for powerful roles. Embrace zero-standing privileges by granting sensitive permissions only when workflows require them.

- Expose those excessive permissions and hidden escalation paths that could amplify AI service attacks.

- Create a clear access audit trail. Built-in access reviews and entitlement visibility show who, or what, had access to which systems and when, by default.

- Separate “instructions” from “retrieved content” at the application layer.

- Provenance tagging (what came from where).

- Output controls for sensitive data classes.

4) Add An “AI Audit Trail” our SOC Can Actually Use

If the SOC can’t see it, it didn’t happen (and it will happen again).

Log and alert on:

- Model spend spikes / token anomalies (cost DoS and abuse)

- Abnormal connector reads (bulk scraping patterns)

- High-risk tool calls outside normal working patterns

- New API key creation + immediate use

We Don’t Have The Headcount For This

ISC2’s 2024 workforce study reports 67% of respondents have a staffing shortage.

As a result, our operating model has to be “secure by default” and “easy by design”, because our team does not have spare cycles to hand-hold every new AI workflow.

The goal of governance isn’t to slow the business down. It’s to stop every product team from inventing their own security model for AI at 2 a.m., in three clouds, with one overworked IAM engineer and a Jira ticket called “LLM stuff”.

That’s how AI service attacks become our next incident report.

If AI assistants and copilots now sit on top of your data and automation stack, they also sit on top of your identity problem. Make the agent blind spot go away with our free trial. and in about 30 minutes you’ll see every entitlement across multi-cloud and SaaS environments, including service principals, agent identities, API keys, and other non-human accounts. From there we can enforce least privilege, just-in-time access, review risky access paths, and make sure our AI services aren’t operating with more power than they should.